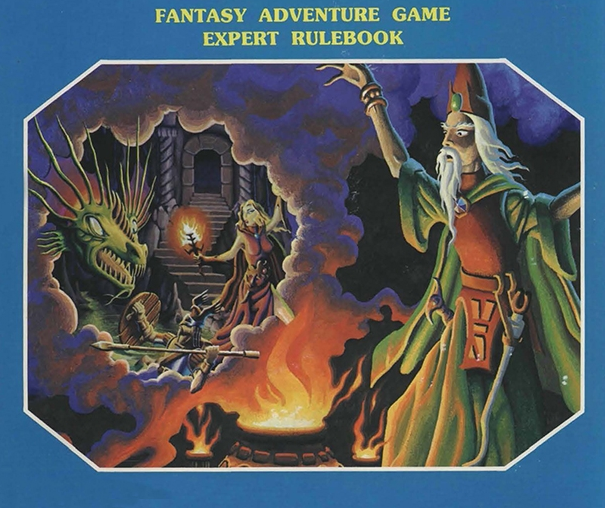

Wizards of Like

The News Feed algorithm is made to serve ads, not please users

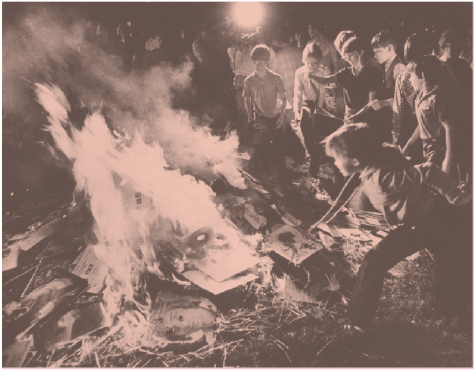

A year ago, when the emotional manipulation paper turned into a scandal, it became clear that many Facebook users had not previously considered how their News Feeds were manipulated; they were inclined to instinctively accept the feed as a natural flow. That seems to reflect consumers’ attitude toward television, where content simply flows from channels and we don’t generally stop to think about the decisions that led to that content being there and how it might have been different. We just immerse ourselves in it or change the channel; we don’t try to commandeer the broadcasting tower. Social media’s advent was supposed to do away with central broadcast towers, but it hasn’t really turned out that way. Instead, mass media companies distribute their products through the platforms, and consumers’ role is to boost the signal for them. The fact that consumers create content…